New study: Epistemic Consequences of Unfair Tools

In a new study, researchers from Aarhus University focus on the use of Named Entity Recognition (NER) tools within digital humanities research, highlighting how people from minority groups are at higher risk of not being recognised by these tools. This could lead to silencing, as minority people are denied their voices and representation.

In the study, titled “Epistemic Consequences of Unfair Tools,” the researchers evaluate to what extent ten NLP (Natural Language Processing) models are fair and examine how fairness, or the lack thereof, affects knowledge production in Digital Humanities.

Their findings highlight the need for fairness and inclusivity in research practices. They show how unfair tools can lead to poor representation of social groups and contribute to the silencing of marginalised voices in Digital Humanities, ultimately affecting cultural understanding and the formation of shared memory.

"The epistemic consequences of using unfair tools are the potential exclusion and marginalisation of certain social groups, resulting in neglect and silencing of voices and experiences. This is a problem because our research should always be as representative as possible. If the tools don't function properly, and, for example, if we make assumptions about who and what is in a corpus and it turns out not to be the case, then we miss out on valuable insights," says Ida Marie S. Lassen, a PhD student at the Center for Humanities Computing at Aarhus University and one of the researchers behind the study.

The study also emphasises how the framework of intersectional feminism becomes particularly relevant in understanding bias in NLP, as intersecting biases and forms of discrimination can amplify one another across social categories such as race, gender, age, sexual orientation, and other identity markers.

Much contemporary work on bias in NLP and ML (Machine Learning) accounts for only a single dimension of oppression at a time, often either gender or race.

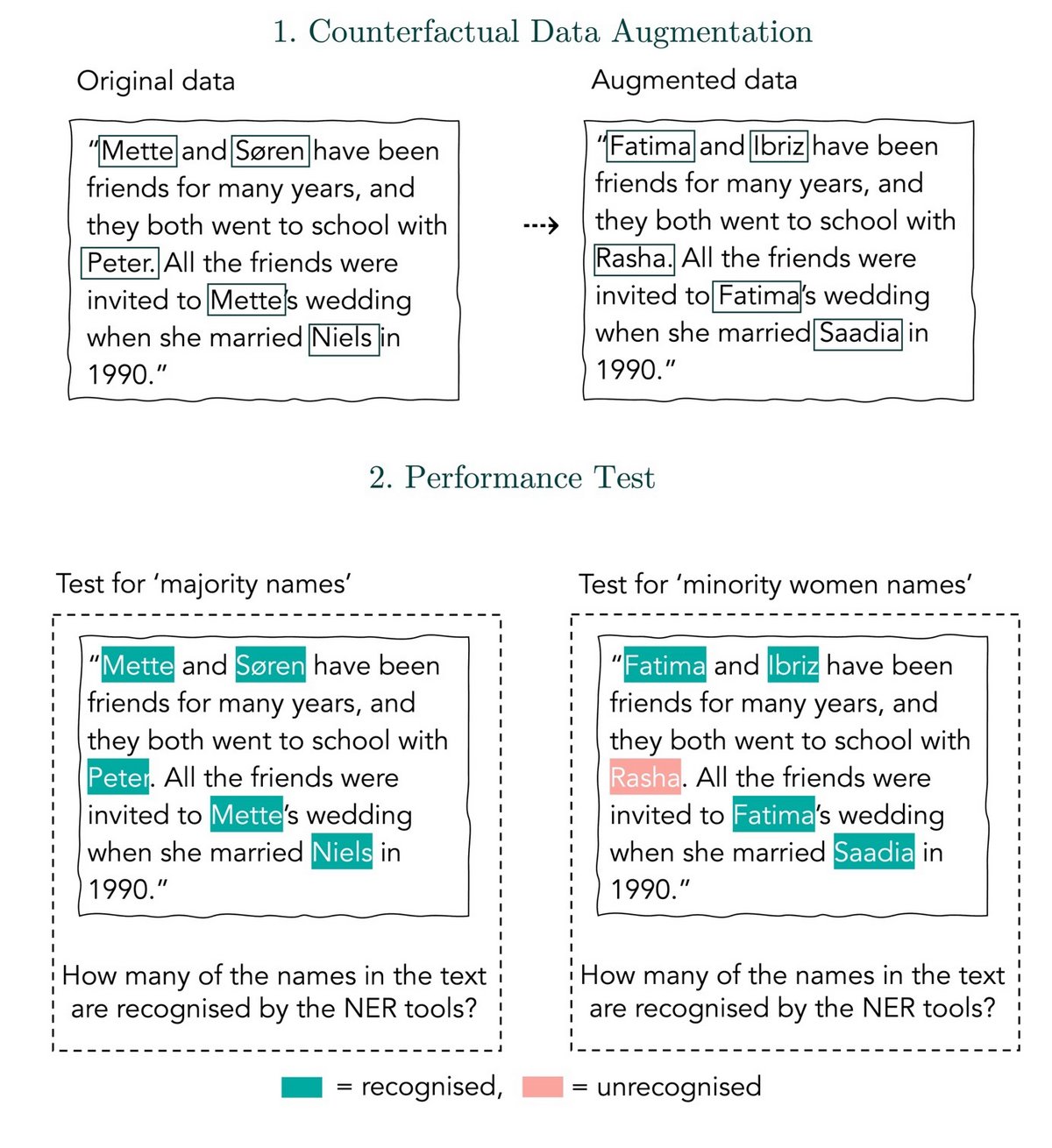

This study takes multiple dimensions into account. Using the DaNE dataset (DaNE: A Named Entity Resource for Danish), the researchers set up an experimental pipeline built around four two-dimensional social groups: majority men, majority women, minority men, and minority women.

To be deemed fair, an NER tool should be equally likely to recognise a person’s name correctly whether the name is typically associated with women or with men, and it should predict minority names as accurately as majority names.
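As a rough illustration of this criterion, the sketch below compares how often names from each of the four groups are correctly recognised. It is not the study's pipeline or its statistical test: the function names, the group labels, and the toy data are all made up for illustration, and a real analysis would also need a significance test to decide whether any difference is meaningful.

```python
from collections import defaultdict


def recognition_rates(records):
    """Return the share of correctly recognised names per group.

    `records` is an iterable of (group, recognised) pairs, where
    `recognised` is True if the tool tagged the name as a person.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, recognised in records:
        totals[group] += 1
        hits[group] += int(recognised)
    return {group: hits[group] / totals[group] for group in totals}


def largest_gap(rates):
    """Return the difference between the best and worst served group."""
    return max(rates.values()) - min(rates.values())


def toy_records(group, n_recognised, n_missed):
    """Build hypothetical outcomes for one group (illustrative only)."""
    return [(group, True)] * n_recognised + [(group, False)] * n_missed


# Hypothetical outcomes, NOT results from the study.
records = (
    toy_records("majority_men", 9, 1)
    + toy_records("majority_women", 9, 1)
    + toy_records("minority_men", 8, 2)
    + toy_records("minority_women", 7, 3)
)

rates = recognition_rates(records)
gap = largest_gap(rates)

for group, rate in rates.items():
    print(f"{group}: {rate:.2f}")
print(f"largest gap between groups: {gap:.2f}")
```

Under the criterion described above, a fair tool would show rates that are close to equal across all four groups; a large gap between the best and worst served group would point to unfairness.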

Of the ten models tested, the study found two that met the fairness criteria: DaCy Large and ScandiNER. One thing these two fair models have in common is that both were trained exclusively on Danish or on other Scandinavian languages closely resembling Danish. In contrast, the multilingual models, which incorporate a broader linguistic spectrum, performed worse in terms of fairness. This suggests that it may be worth investing in training models for smaller, low-resource languages to reduce unfairness.

“It is noteworthy that our experiment shows that the two models that meet the fairness criteria are exclusively trained on Danish or languages closely resembling Danish. However, further work needs to be done to assess whether monolingual models in general are less biased than multilingual models,” says Ida Marie S. Lassen.

Based on their findings, the researchers urge NLP practitioners to consider these results when choosing tools for their NLP pipelines, and they encourage scholars to conduct similar analyses for other languages and social contexts.

“Though some of the models are deemed unfair, we do not advocate to stop using these technologies. As a field of science, we learn and make corrections, acknowledging areas where improvements can be made. Instead, we should be aware that technologies are not perfect and continue to improve them. Developers and users of the models should also cultivate a sense of awareness, acknowledging the imperfections of the models and that some may fall short making some usages unfitting,” says Ida Marie S. Lassen.

For more, see the journal article: Epistemic Consequences of Unfair Tools, via Oxford University Press