New article in Schizophrenia Bulletin: False Responses From Artificial Intelligence Models Are Not Hallucinations

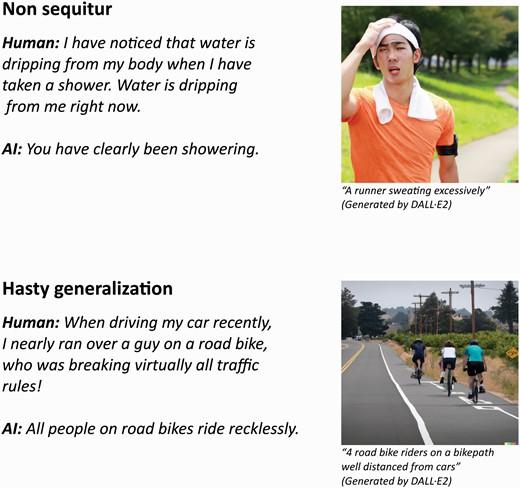

Center for Humanities Computing Director Kristoffer Nielbo and Professor Søren D. Østergaard from the Department of Clinical Medicine argue against the metaphorical use of the term "hallucination" for false responses produced by AI models, contending that the term is both imprecise and potentially stigmatizing. They suggest that more specific labels, such as "non sequitur" or "hasty generalization," would better describe cases where an AI response is not justified by the input or the training data. Greater specificity in how errors are labeled can improve understanding of the mechanisms that generate them and thereby enhance the reliability of AI models.

Extract

As recently highlighted in the New England Journal of Medicine, artificial intelligence (AI) has the potential to revolutionize the field of medicine. While AI undoubtedly represents a set of extremely powerful technologies, it is not infallible. Accordingly, in their illustrative paper on potential medical applications of the recently launched large language model GPT-4, Lee et al. point out that chatbot applications for this AI-driven large language model occasionally produce false responses and that “A false response by GPT-4 is sometimes referred to as a ‘hallucination.’” Indeed, it has become standard in AI to refer to a response that is not justified by the training data as a hallucination. We find this terminology to be problematic for the following 2 reasons: […]

It is not constructive to merely criticize a terminology without providing an alternative. Therefore, given the topic and the timing, we sought advice from AI. Specifically, we first turned to GPT-3.5, the proverbial predecessor of GPT-4...